Use of AI coding agents is skyrocketing as organizations look to innovate and solve business problems faster. At the same time, coding agents like Claude Code, OpenAI’s Codex, and Gemini CLI raise new questions about risks that security teams are not prepared to handle.

To help, Sysdig is introducing runtime detections for AI coding agents. With real-time visibility tailored to monitor how AI agents behave across developer and cloud environments, security teams can distinguish between legitimate AI-assisted activity and suspicious behavior that could signal compromise.

AI coding agents introduce a new attack surface

An AI coding agent is powered by AI – usually large language models – and can write, modify, test, and operate code on behalf of a user or system. AI coding agents operate very differently from traditional development tools. Unlike static code generators, modern agents can:

- Execute commands directly on user systems

- Read and modify files across repositories

- Access environment variables and credentials

- Interact with repository APIs such as GitHub

- Generate and execute code automatically

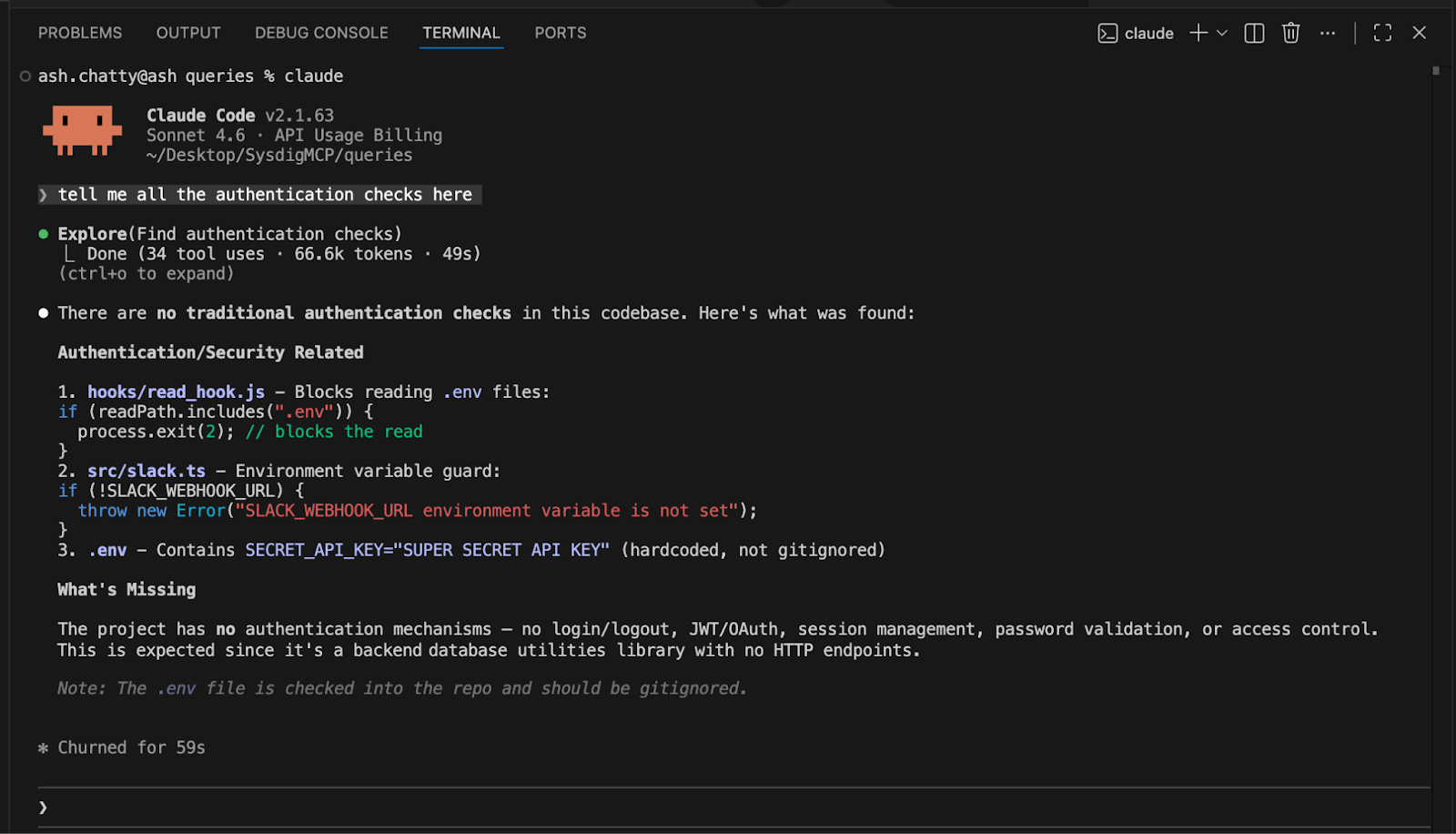

In practice, these capabilities mean AI coding agents often run with minimal human intervention and operate as autonomous actors within development environments. They can perform many of the same actions as a developer at machine speed and scale. They also have broad system permissions and access to sensitive assets, including source code, tokens, and credentials, which makes them highly attractive targets for attackers.

When AI agents can autonomously write, modify, and run code, the risk is no longer just about what code gets committed. The real risk lies in what the agent does at runtime — the commands it executes, the files it accesses, and the systems it interacts with.

Security risks associated with AI coding agents can include:

- Remote code execution (RCE) triggered by malicious repositories

- Credential theft from configuration files or environment variables

- Malicious code generation inserted into pull requests

- Data leakage through prompts or generated output

- Supply chain attacks targeting CI/CD workflows

Even with secure development practices, AI agents can still execute unexpected actions. If an agent is compromised, misconfigured, or manipulated, it may perform tasks that look legitimate but introduce real security threats. This is why real-time visibility into the behavior of AI coding agents is essential.

Runtime visibility for AI coding agents

Security programs that focus primarily on preventing vulnerable code from reaching production will miss a critical aspect of security in the age of AI. Modern AI agents don’t just generate code; they execute actions. So while it is important to identify issues before code is deployed, it’s just as important to address the risks that arise while an agent is running.

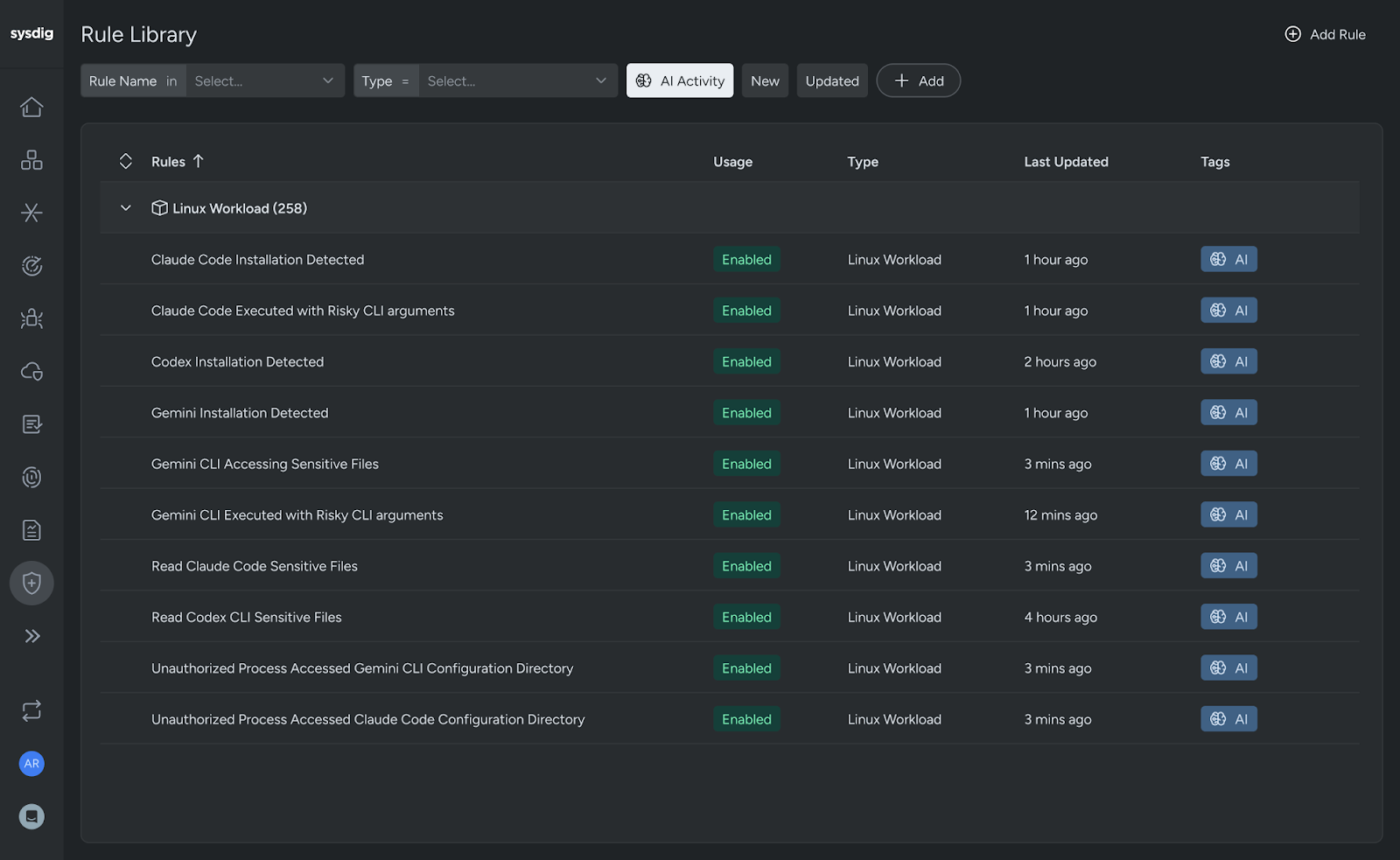

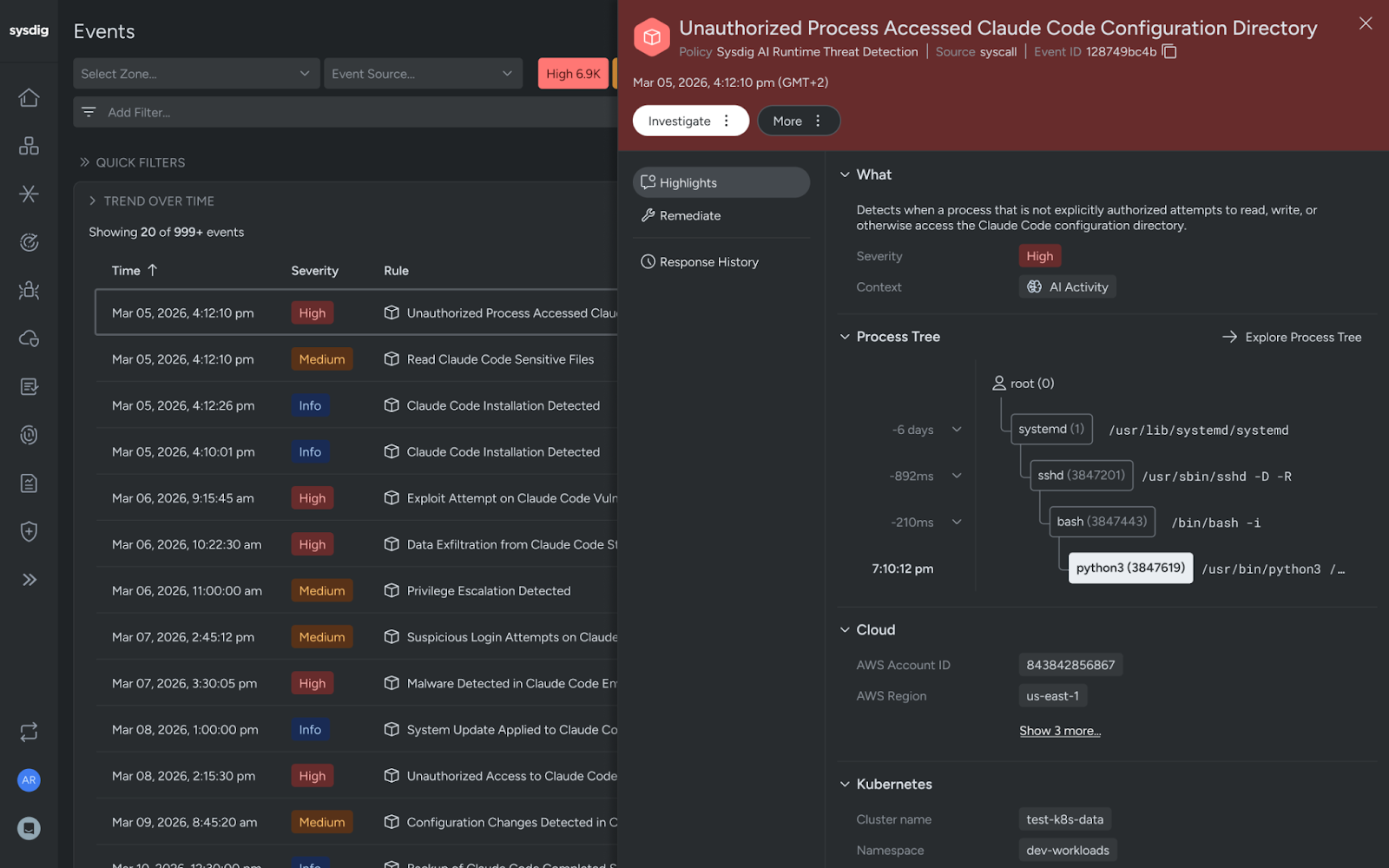

To help organizations adopt AI-assisted development securely, Sysdig has developed runtime detections for AI coding agents. These detections give security teams real-time visibility into suspicious AI coding agent behavior across developer and cloud environments.

Sysdig’s runtime detections are built by Sysdig’s Threat Research Team using standard Falco primitives. The rules model the expected behavior of AI coding agents so they can distinguish normal coding-agent use from risky or clearly malicious behavior.

The detection rules start by identifying the installation and use of AI coding agents like Claude Code, Codex, and Gemini CLI. This helps security teams know where the tooling is in use so they can put proper protections in place. Once the rules have identified where coding tools are present, they monitor agent behavior and analyze activity such as:

- Attempts to access sensitive files

- Unauthorized processes interacting with agent configuration directories

- Risky command-line arguments that weaken protections

Once enabled, security and SOC teams will be alerted when an AI agent is behaving unexpectedly or potentially maliciously. For example, if an agent is executed with arguments that allow unrestricted file writes or attempts to access sensitive credential files, Sysdig immediately flags the activity for investigation and response.
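To make the three detection categories above more concrete, here is a minimal Python sketch of the kind of logic involved. It is an illustration only, not Sysdig's actual rules, which are written with Falco primitives rather than Python. Everything named in it is an assumption made for the example: the `ProcessEvent` shape, the agent executable names, the configuration directory names, the list of sensitive paths, and especially the flags in `RISKY_FLAGS`, which are made-up placeholders rather than real options of any agent.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath
from typing import List, Optional, Tuple

# Assumed executable names for the agents mentioned in this post.
AGENT_BINARIES = {"claude", "codex", "gemini"}

# Hypothetical placeholder flags; substitute the real options you consider risky.
RISKY_FLAGS = {"--example-disable-sandbox", "--example-skip-approvals"}

# Illustrative credential locations, matched as path suffixes.
SENSITIVE_SUFFIXES = (".aws/credentials", ".ssh/id_rsa", ".ssh/id_ed25519", ".netrc")

# Assumed per-agent configuration directory names.
AGENT_CONFIG_DIRS = (".claude", ".codex", ".gemini")


@dataclass(frozen=True)
class ProcessEvent:
    """Simplified, hypothetical view of one observed runtime event."""
    process_name: str
    args: Tuple[str, ...] = ()
    opened_path: Optional[str] = None


def classify(event: ProcessEvent) -> List[str]:
    """Return the findings this event triggers, in a fixed order."""
    findings: List[str] = []
    is_agent = event.process_name in AGENT_BINARIES

    if is_agent:
        # Inventory signal: an AI coding agent is running here.
        findings.append("agent_in_use")
        # Agent started with arguments that weaken its protections.
        if any(arg in RISKY_FLAGS for arg in event.args):
            findings.append("risky_arguments")

    if event.opened_path:
        # Agent touching a credential file.
        if is_agent and event.opened_path.endswith(SENSITIVE_SUFFIXES):
            findings.append("sensitive_file_access")
        # A non-agent process touching an agent's configuration directory.
        parts = PurePosixPath(event.opened_path).parts
        if not is_agent and any(d in parts for d in AGENT_CONFIG_DIRS):
            findings.append("config_dir_access_by_other_process")

    return findings


if __name__ == "__main__":
    event = ProcessEvent(
        process_name="claude",
        args=("--example-skip-approvals",),
        opened_path="/home/dev/.aws/credentials",
    )
    print(classify(event))
```

A real detection would work from kernel-level events and richer context, such as the process lineage, since agents are often launched through a language runtime rather than under their own executable name. The sketch only shows how the inventory signal and the behavioral signals can be layered.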

The new detections work alongside Sysdig’s complete library of runtime rules, which already detect threats such as reverse shells, binary tampering, persistence mechanisms, and other high‑risk behavior. Our goal is to enable security teams to audit and protect AI agent behavior without impacting productivity.

Extending Sysdig’s AI workload security

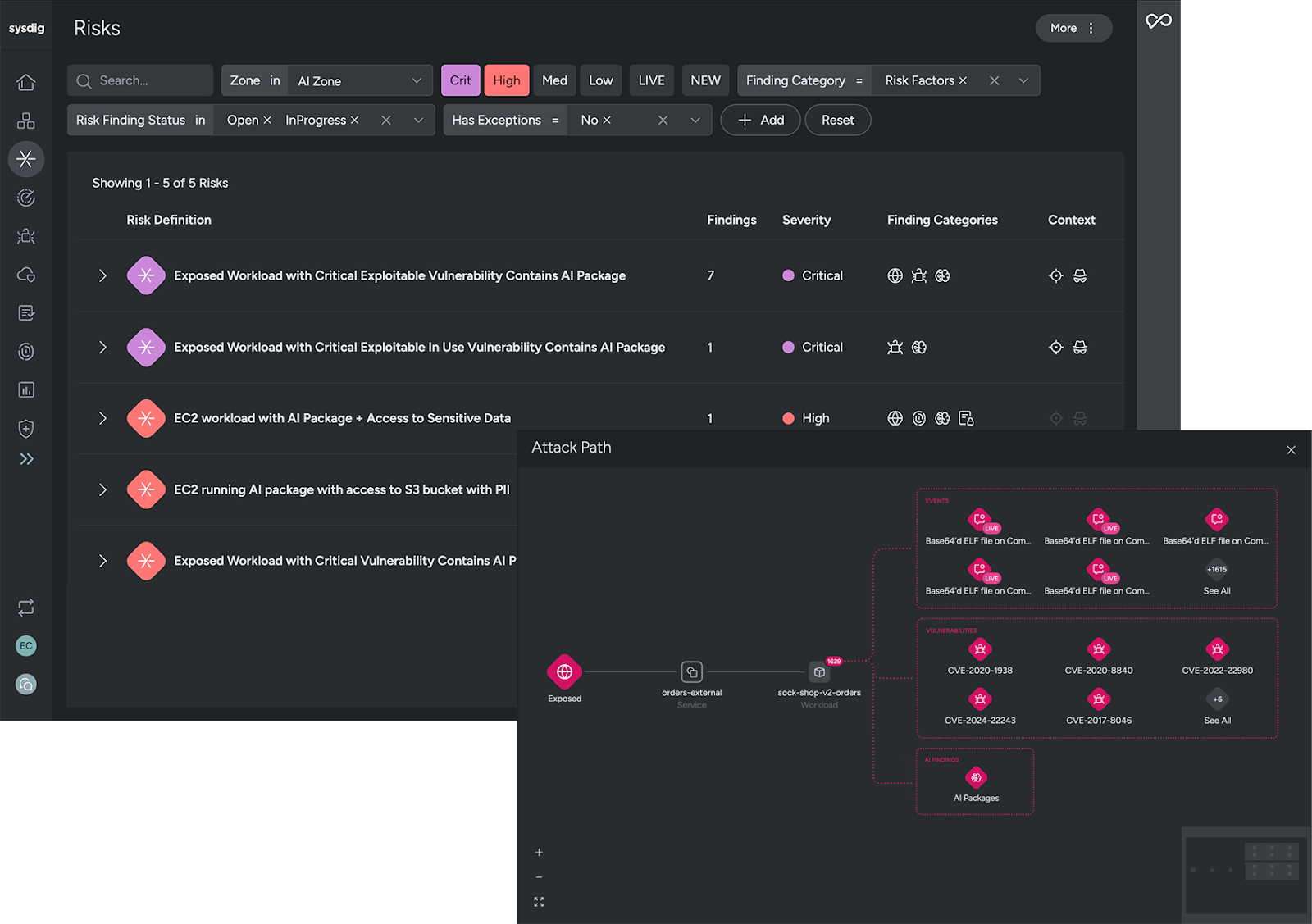

Runtime detections for AI coding agents build on Sysdig’s existing AI Workload Security solution. For AI workloads, Sysdig already enables organizations to:

- Identify workloads running AI frameworks and models

- Scan for vulnerabilities in AI packages

- Highlight any publicly exposed AI services

- Detect live threats targeting AI workloads

- Correlate findings to show connected risks and attack paths

Bringing risks, attack paths, and AI workloads into a single view makes issue investigation faster and more intuitive. Clear prioritization and runtime insights help security teams resolve issues quickly and enable organizations to safely scale AI use.

With protection extended to the autonomous coding layer, where AI agents actively write and execute code, Sysdig’s CNAPP helps organizations secure the full lifecycle of AI adoption, from development to deployment and runtime.

Enabling secure AI innovation

AI coding agents are transforming how software is built. They enable faster development cycles, automate repetitive tasks, and allow users — both developers and non-developers — to build complex workflows quickly.

Organizations that want to take advantage of AI-assisted development must ensure they have visibility into what AI agents are actually doing inside their environments.

As AI becomes embedded in how software is built and operated, runtime security will play an increasingly critical role. Sysdig is here to help you secure that future. With runtime detections for AI coding agents, Sysdig enables organizations to:

- Safely adopt AI-powered tools

- Monitor AI behavior in real time

- Detect suspicious or malicious actions

- Protect sensitive data, credentials, and code

- Maintain security and compliance across AI-assisted workflows

Ready to dive deeper? Check out the blog post from our Threat Research Team, “AI coding agents are running on your machines — Do you know what they're doing?”, for a look under the hood at how Sysdig helps secure AI coding agents.