Secure and accelerate AI innovation

Visibility and security for AI workloads, agents, and data

Protect AI without putting on the brakes

Safeguard fast-moving AI tools and agents

Automatically identify and secure sanctioned and shadow AI tools in your environment.

Establish guardrails and stay ahead of regulations with clear visibility into the security posture of your AI workloads.

Our purpose-built solution makes AI security easy for teams of any experience level to implement.

Sysdig’s real-time AI cloud defense empowers AI innovation without compromise.

Stay ahead of AI threats with real-time visibility

Defend your AI environments with relevant, actionable insights in real time. Sysdig’s deep runtime insights help you see everything that’s happening as it happens.

Identify and prioritize AI coding agent risk

Purpose-built runtime detections for AI coding agents help organizations safely adopt autonomous development tools. Threat detection for coding assistants such as Claude Code, Codex, and Gemini helps you identify risky activity in real time.

Investigate and respond in minutes with AI assistance

Sysdig’s agentic AI cloud security analyst, Sysdig Sage, interprets security alerts, surfaces business risk, and suggests next steps, enabling organizations to thoroughly understand and quickly remediate threats.

No-compromise security for AI workloads

AI threat visibility

Automatically detect suspicious activity and threats to AI workloads across key solutions such as OpenAI, Amazon Bedrock, Anthropic, Google Vertex AI, IBM watsonx, and TensorFlow.

Risk prioritization

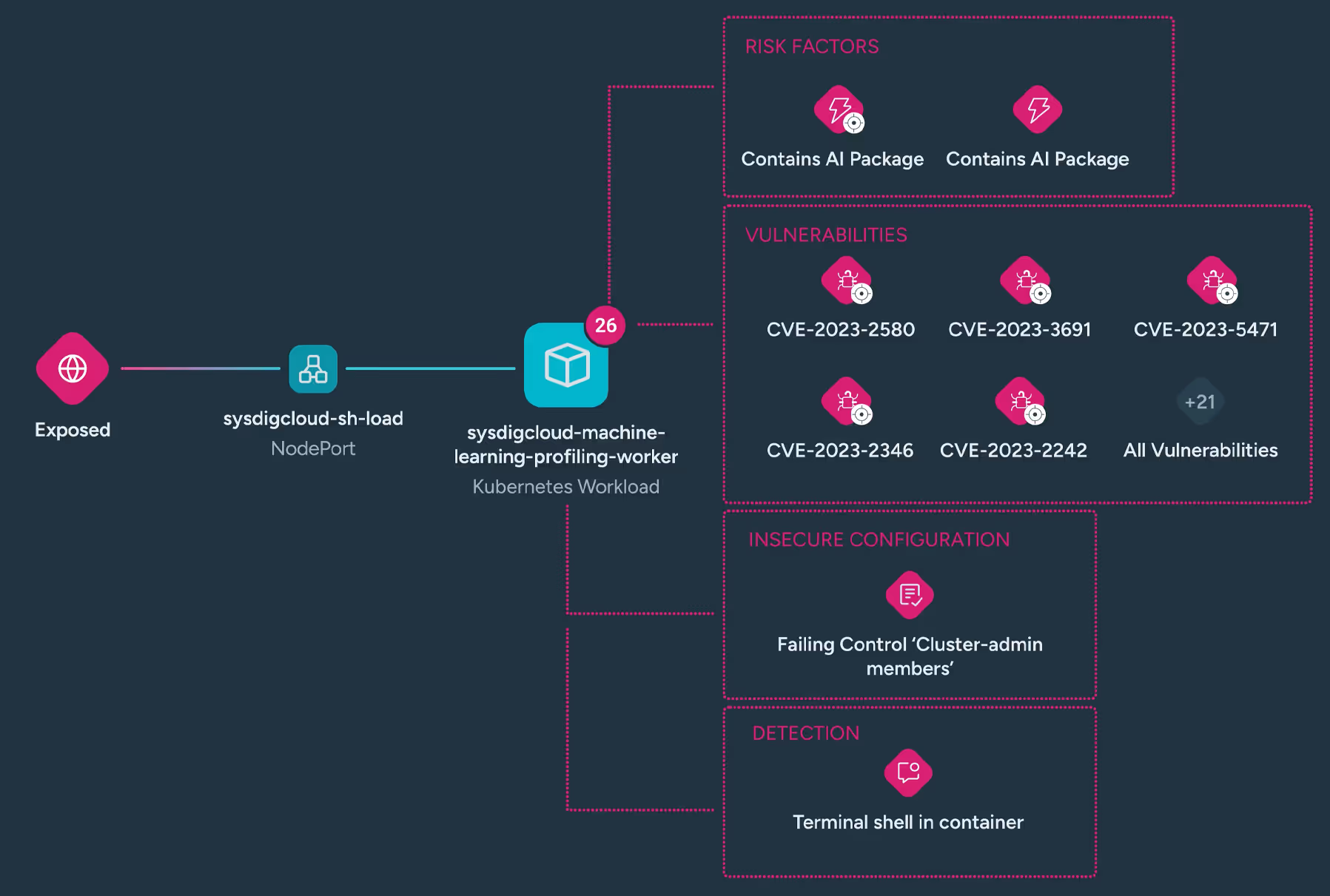

Identify public exposure, vulnerabilities, misconfigurations, and active threats (such as shell access or remote file copying), and prioritize the most urgent risks to your AI workloads, tools, and data.

Attack path analysis

Uncover hidden attack paths by correlating AI assets with activity and visualizing risks across interconnected resources. Runtime insights, real-time detections, and response actions help you stop attackers.

Runtime vulnerability exposure

Prioritize vulnerabilities in your AI deployments by using runtime insights to identify the highest-risk AI packages in use, so the most critical issues are addressed first.
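
To make the idea concrete, here is a minimal Python sketch of runtime-aware prioritization. It is an illustration only and not Sysdig's implementation or API: the `Finding` fields, the `SEVERITY_RANK` ordering, the package names, and the placeholder CVE identifiers are all assumptions. Findings on packages observed in use at runtime sort ahead of findings on packages that are installed but never loaded, and severity breaks ties within each group.

```python
from dataclasses import dataclass

# Hypothetical severity ordering for illustration; not a Sysdig schema.
SEVERITY_RANK = {"critical": 4, "high": 3, "medium": 2, "low": 1}


@dataclass(frozen=True)
class Finding:
    package: str
    cve: str        # placeholder identifiers below, not real CVEs
    severity: str
    in_use: bool    # assumed signal: package was observed loaded at runtime


def prioritize(findings):
    """Sort in-use packages first, then by severity (highest first)."""
    return sorted(
        findings,
        key=lambda f: (not f.in_use, -SEVERITY_RANK[f.severity]),
    )


findings = [
    Finding("example-ml-lib", "CVE-0000-0001", "critical", in_use=False),
    Finding("example-llm-sdk", "CVE-0000-0002", "high", in_use=True),
    Finding("example-utils", "CVE-0000-0003", "low", in_use=True),
]

ranked = prioritize(findings)
for f in ranked:
    status = "in use" if f.in_use else "not loaded"
    print(f.package, f.cve, f.severity, status)
```

In this sketch the critical finding lands last because its package is never loaded. A real policy might weight severity and runtime usage differently.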

Your Blueprint to Securing AI Workloads, the Right Way

How Sysdig’s AI workload security works

Sysdig’s AI workload security delivers real-time visibility into active risk across AI and GenAI environments. It supports AI and ML engines such as OpenAI, Amazon Bedrock, Anthropic, Google Vertex AI, IBM watsonx, and TensorFlow, as well as AI coding agents including Claude Code, OpenAI’s Codex, and Gemini CLI, so teams can see where AI tools are running and which risks require attention.

AI tools have expanded the attack surface and the potential for misuse and data exposure. By continuously analyzing cloud context like public exposure, vulnerabilities in active AI packages, misconfigurations, and suspicious coding agent activity, Sysdig empowers teams to stop potential threats before they escalate.

AI workload security is integrated into Sysdig’s CNAPP, featuring our Cloud Attack Graph. By correlating findings across vulnerabilities, configurations, permissions, and runtime events, you get a unified view that simplifies investigation, shows how risks connect, and clearly highlights attack paths.
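
As a rough illustration of what an attack path means here, the Python sketch below runs a breadth-first search over a small, made-up resource graph to find a route from a publicly exposed entry point to a sensitive AI asset. It is not the Cloud Attack Graph or any Sysdig API: the resource names, the meaning of an edge ("can reach or assume"), and the `find_path` helper are assumptions chosen for the example.

```python
from collections import deque

# Hypothetical resource graph; an edge means "can reach or assume".
edges = {
    "internet": ["inference-api"],
    "inference-api": ["model-runner"],
    "model-runner": ["training-data-bucket"],
    "build-agent": ["model-runner"],
}


def find_path(graph, start, goal):
    """Return the shortest path from start to goal, or None if unreachable."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


path = find_path(edges, "internet", "training-data-bucket")
print(" -> ".join(path) if path else "no path found")
```

Each hop in the returned path would correspond to a correlated finding, such as public exposure, a misconfiguration, or an overly broad permission. That is why seeing the findings on one graph makes the path easier to spot than reviewing them in separate lists.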