What is headless cloud security? [A complete guide]

Headless cloud security is a cloud security architecture that decouples a platform's data and capabilities from its user interface, exposing the platform through programmable interfaces instead. AI agents operate the platform directly through APIs and skills. Humans retain control by setting policy and approving consequential actions.

What created the need for headless cloud security?

Cloud security has been built around dashboards for over a decade. The model assumes a human is always available to triage alerts, correlate signals, and decide what to do next.

That assumption no longer holds.

Here's why:

Cloud attacks used to unfold over days or weeks. Now they don't. With AI in the hands of attackers, the path from initial access to full control can compress into minutes. By the time an alert reaches a human, the attack is often already over.

Which means a model that requires human review at every step can't keep up. The mismatch isn't about better dashboards or faster alerting. It's about who, or what, is operating the security platform.

Then there's where work is happening.

Engineers increasingly operate through AI coding agents. Logging into a separate tool for every task is no longer how the work gets done. Security tooling, by and large, hasn't followed.

The result: Security still lives in dedicated dashboards. But the work it's meant to protect has moved somewhere else. That gap creates friction. It also creates blind spots.

The bottleneck isn't the quality of the dashboard. It's the assumption that a human has to be the one reading it.

What are the core components of a headless cloud security architecture?

A headless cloud security architecture isn't defined by a single technology.

It's defined by how a few specific components work together to expose security capabilities for programmatic, agent-driven consumption, including:

- Extension layer. The surface that makes the platform reachable. Typically Model Context Protocol (MCP) servers, APIs, and configuration files that connect platform capabilities to external workflows and ecosystems. Without it, the platform stays locked behind whatever interface the vendor designed.

- Data architecture layer. Where security data is ingested and stored. This can be the security platform itself, or the organization's own data lake. The data this layer holds is what agents reason over, which is why its fidelity directly shapes the quality of decisions agents can make.

- Agentic layer. Where procedural knowledge lives. In practice, this takes the form of agent skills, which are structured packages that tell coding agents how to interact with the platform for specific workflows like vulnerability management, posture remediation, or cloud detection and response.

- Secure control plane. The coordination layer. It serves as a centralized point for coordinating multiple coding agents working cooperatively on larger or more complex tasks, and supports the oversight needed to operate them at scale.

FYI: An MCP server alone doesn't make a platform headless. A platform can add an MCP server on top of a dashboard-first design and expose some capabilities to agents. That's a partial fix. A headless architecture is built differently from the start, with all four layers designed so agents can run the platform directly.
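To make the four layers concrete, here is a minimal Python sketch built on loud assumptions. Every name in it (`Finding`, `FindingStore`, `ExtensionLayer`, `Skill`, `ControlPlane`) is hypothetical and does not come from any product. The in-process tool registry stands in for what an MCP server or API would expose in a real platform, and the in-memory store stands in for the platform's data or the organization's data lake.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Data architecture layer (hypothetical): an in-memory stand-in for the
# security platform's store or the organization's own data lake.
@dataclass
class Finding:
    resource_id: str
    kind: str      # e.g. "posture", "vulnerability", "runtime", "identity"
    severity: str  # e.g. "low", "high", "critical"

class FindingStore:
    def __init__(self, findings: list[Finding]) -> None:
        self._findings = list(findings)

    def query(self, kind: Optional[str] = None) -> list[Finding]:
        return [f for f in self._findings if kind is None or f.kind == kind]

# Extension layer (hypothetical): named capabilities that agents can call,
# standing in for what an MCP server or API would expose.
class ExtensionLayer:
    def __init__(self) -> None:
        self._tools: dict[str, Callable[..., object]] = {}

    def expose(self, name: str, fn: Callable[..., object]) -> None:
        self._tools[name] = fn

    def call(self, name: str, **kwargs: object) -> object:
        return self._tools[name](**kwargs)

# Agentic layer (hypothetical): a skill packages procedural knowledge as
# ordered steps, each of which calls capabilities through the extension layer.
@dataclass
class Skill:
    name: str
    steps: list[Callable[[ExtensionLayer], object]]

    def run(self, ext: ExtensionLayer) -> list[object]:
        return [step(ext) for step in self.steps]

# Secure control plane (hypothetical): dispatches skills to agents and keeps
# an audit trail that humans can review.
@dataclass
class ControlPlane:
    audit_log: list[str] = field(default_factory=list)

    def dispatch(self, agent_id: str, skill: Skill, ext: ExtensionLayer) -> list[object]:
        self.audit_log.append(f"{agent_id} ran {skill.name}")
        return skill.run(ext)

# Wiring the layers together with illustrative data.
store = FindingStore([Finding("vm-1", "vulnerability", "critical"),
                      Finding("bucket-7", "posture", "high")])
ext = ExtensionLayer()
ext.expose("list_findings", store.query)
triage = Skill("vulnerability-triage",
               [lambda e: e.call("list_findings", kind="vulnerability")])
plane = ControlPlane()
result = plane.dispatch("agent-a", triage, ext)
```

The design choice worth noticing is that the skill never touches the store directly. It only calls what the extension layer exposes, and the control plane records who ran what, which is the separation the four-layer description implies.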

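Essentially, headless cloud security works by giving agents the work they handle best, such as high-volume triage and signal correlation, and humans the work they handle best: setting policy and approving consequential actions. The architecture is built so each side can do its part.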

Here's what an actual cloud security workflow looks like in a headless model:

How headless cloud security goes beyond traditional cloud security

Most cloud security platforms offer a similar list of capabilities: Posture management. Threat detection. Vulnerability scanning. Identity context.

What changes with a headless cloud security architecture isn't the capability list. It's what teams can do with those capabilities once the platform is built for programmatic, agent-driven consumption.

Here's what becomes possible:

- Workflows shaped by the organization, not the vendor.

A traditional cloud security platform organizes work around the screens its designers built. A headless platform exposes the underlying capabilities directly. Teams can compose workflows around their own cloud environment, risk model, and processes. The platform adapts to the team. Not the other way around.

- Cloud security operating where work already happens.

Engineers and security teams increasingly work through AI coding agents, chat tools, and IDEs. A headless platform can be reached from inside those environments through APIs and skills. Security findings and actions show up where the cloud work is, rather than waiting for someone to switch contexts to a separate tool.

- Triage and correlation done in parallel.

Traditional cloud security relies on a human reading findings serially: open the alert, investigate, decide what to do, move to the next one. A headless architecture lets agents work on multiple findings at once. They correlate signals across runtime, posture, identity, and vulnerability data without the bottleneck of a single set of human eyes.

- Procedural knowledge that scales beyond team size.

When cloud security workflows live as skills, the expertise to run them stops being trapped in the heads of senior engineers. A skill for vulnerability triage encodes what an experienced engineer would do. Anyone on the team can invoke it. New environments, new tools, and new team members can all draw on the same procedural knowledge.

- A faster path from detection to remediation.

In a headless model, an agent investigates the finding, drafts a remediation, and surfaces it for approval. No human has to coordinate the steps in between, so the time from finding to fix shrinks.
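The parallel triage and correlation point can be illustrated with a short `asyncio` sketch. It assumes a hypothetical `investigate` coroutine that stands in for an agent's per-finding investigation (here it only sleeps and echoes the finding back), and it correlates by grouping findings that share a resource ID across runtime, posture, identity, and vulnerability sources. Grouping by resource ID is one simple correlation rule chosen for illustration, not a description of how any particular platform correlates signals.

```python
import asyncio
from collections import defaultdict

# Each signal is (source, resource_id, detail); the data is illustrative.
SIGNALS = [
    ("runtime", "vm-1", "unexpected outbound connection"),
    ("vulnerability", "vm-1", "critical CVE in installed package"),
    ("identity", "vm-1", "attached role can read all buckets"),
    ("posture", "bucket-7", "public read access enabled"),
]

async def investigate(source: str, resource_id: str, detail: str) -> dict:
    """Hypothetical stand-in for an agent investigating one finding."""
    await asyncio.sleep(0.01)  # simulate I/O such as API lookups
    return {"source": source, "resource": resource_id, "detail": detail}

def correlate(results: list[dict]) -> dict[str, list[str]]:
    """Group investigated findings by resource so related signals stay together."""
    by_resource: dict[str, list[str]] = defaultdict(list)
    for r in results:
        by_resource[r["resource"]].append(r["source"])
    return dict(by_resource)

async def triage(signals: list[tuple[str, str, str]]) -> dict[str, list[str]]:
    # All investigations run concurrently instead of one after another;
    # gather returns results in the same order as the inputs.
    results = await asyncio.gather(*(investigate(*s) for s in signals))
    return correlate(results)

if __name__ == "__main__":
    groups = asyncio.run(triage(SIGNALS))
    # Resources flagged by several independent sources are listed first.
    for resource, sources in sorted(groups.items(), key=lambda kv: -len(kv[1])):
        print(resource, sources)
```

Here `vm-1` surfaces with three corroborating sources while `bucket-7` has one, which is the kind of cross-source picture a single analyst reading alerts one at a time would have to assemble by hand.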

The common thread: each of these depends on an architecture designed for agent consumption from the ground up. Headless cloud security removes the assumption that a human has to be in every loop.
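One way to take humans out of routine loops while keeping them in consequential ones is an approval gate: the agent drafts a remediation, low-risk actions proceed, and anything at or above a human-set threshold waits for approval. The sketch below assumes a hypothetical `Remediation` type, a three-level `Risk` scale, and an `APPROVAL_THRESHOLD` policy value; all of these names are illustrative, and real policies would be richer.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Callable

class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3

@dataclass
class Remediation:
    finding_id: str
    action: str
    risk: Risk

# Hypothetical policy: humans set the threshold; anything at or above it
# is treated as consequential and needs explicit approval.
APPROVAL_THRESHOLD = Risk.MEDIUM

def route(remediation: Remediation, approve: Callable[[Remediation], bool]) -> str:
    """Apply low-risk fixes directly; ask a human (via `approve`) for the rest."""
    if remediation.risk < APPROVAL_THRESHOLD:
        return "applied"  # approve is never called for routine fixes
    return "applied" if approve(remediation) else "rejected"

def console_approver(r: Remediation) -> bool:
    # Stand-in for a real approval channel such as a ticket or chat prompt.
    answer = input(f"Approve '{r.action}' for {r.finding_id}? [y/N] ")
    return answer.strip().lower() == "y"
```

For example, `route(Remediation("f-2", "remove public read on bucket-7", Risk.HIGH), console_approver)` would pause for a human decision, while a `Risk.LOW` remediation would be applied without prompting anyone.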

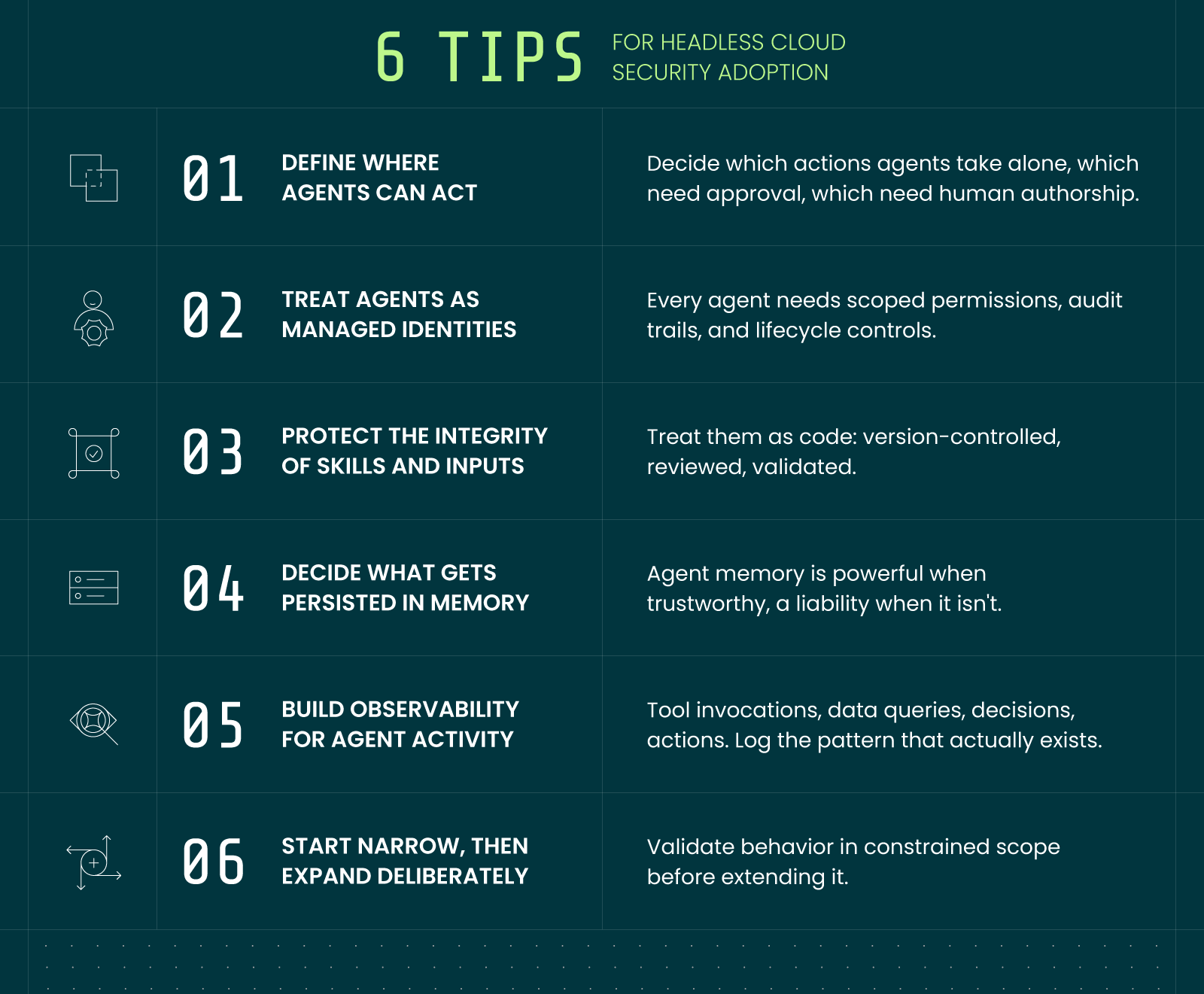

6 considerations for adopting headless cloud security

Adopting headless cloud security is an architectural shift. It works best when teams plan for it deliberately.

The considerations below cover the decisions worth making early:

In short:

Headless cloud security is most successful when the architecture and the operational discipline develop together. The architecture makes new things possible. The discipline is what makes the new things sustainable.

How does headless cloud security relate to agentic security?

It's worth making a distinction between agentic security and headless cloud security. They’re connected, but not the same thing.

Headless cloud security is architectural. It describes a cloud security platform with data and capabilities exposed through programmable interfaces rather than a dashboard.

Agentic security is operational. It describes AI agents reasoning over data, executing workflows, and taking action.

In other words: Headless describes the platform. Agentic describes the operator.
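The platform/operator split maps naturally onto an interface boundary. In this hypothetical Python sketch, `HeadlessPlatform` is a `typing.Protocol` describing what a headless platform might expose, and `AgentOperator` is code that reasons over it; neither name, nor the two methods, comes from a real product. The operator depends only on the programmable interface, so the same platform could be driven by an agent, a script, or a human-facing UI.

```python
from typing import Protocol

class HeadlessPlatform(Protocol):
    """Architecture: capabilities exposed programmatically (hypothetical API)."""
    def list_findings(self, severity: str) -> list[str]: ...
    def open_ticket(self, finding: str) -> str: ...

class AgentOperator:
    """Operation: something that reasons over the data and takes action."""
    def __init__(self, platform: HeadlessPlatform) -> None:
        self.platform = platform

    def handle_critical(self) -> list[str]:
        # The operator only sees the interface, never a dashboard.
        return [self.platform.open_ticket(f)
                for f in self.platform.list_findings("critical")]

class FakePlatform:
    """In-memory stand-in that structurally satisfies HeadlessPlatform."""
    def __init__(self, findings: dict[str, str]) -> None:
        self._findings = findings  # finding id -> severity

    def list_findings(self, severity: str) -> list[str]:
        return [fid for fid, sev in self._findings.items() if sev == severity]

    def open_ticket(self, finding: str) -> str:
        return f"TICKET-{finding}"
```

Swapping `FakePlatform` for a real client would not change `AgentOperator` at all, which is the practical meaning of "headless describes the platform, agentic describes the operator."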

How the shift to headless changes security work

Headless cloud security changes what people spend their time on.

For security analysts, the shift is from operator to orchestrator.

Agents handle the first pass on alerts. The analyst defines what good investigation looks like. They review what agents produce. They step in on cases that require judgment.

For engineering and platform teams, the shift is from working around security to working with it.

Security findings reach the team in the environments they already use, through the same coding agents they already work with. No more context-switching into a separate tool.

For CISOs, the shift is from managing alert volume to managing decisions.

The question changes from "did the team triage everything?" to "are agents acting within the policies we set, and are humans approving the right things?"
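That question can be made concrete as an audit over agent action logs. The sketch below assumes a hypothetical log format (agent, action, whether the action was consequential, and who approved it) and a hypothetical allowlist policy, `ALLOWED_ACTIONS`. It flags actions outside the allowlist and consequential actions that ran without a human approval.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class ActionRecord:
    agent: str
    action: str
    consequential: bool
    approved_by: Optional[str] = None  # human approver, if any

# Hypothetical policy set by humans: the only actions agents may take.
ALLOWED_ACTIONS = {"open_ticket", "tag_resource", "restrict_bucket_acl"}

def audit(records: list[ActionRecord]) -> list[str]:
    """Return one violation message per record that breaks policy."""
    violations = []
    for r in records:
        if r.action not in ALLOWED_ACTIONS:
            violations.append(f"{r.agent}: '{r.action}' is outside policy")
        elif r.consequential and r.approved_by is None:
            violations.append(f"{r.agent}: '{r.action}' ran without approval")
    return violations

if __name__ == "__main__":
    log = [
        ActionRecord("agent-a", "open_ticket", consequential=False),
        ActionRecord("agent-b", "restrict_bucket_acl", consequential=True, approved_by="alice"),
        ActionRecord("agent-c", "restrict_bucket_acl", consequential=True),
        ActionRecord("agent-d", "delete_vm", consequential=True, approved_by="bob"),
    ]
    for v in audit(log):
        print(v)
```

An empty result answers both halves of the question at once: agents stayed within policy, and every consequential action had a human sign-off.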

The key takeaway: less time operating tools. More time directing them.
