Artificial intelligence is trendy, and it seems like it’s everything tech companies talk about.

It also seems like it’s something completely new.

However, AI has been around as an academic discipline since 1956, and it was already beating chess masters by the 90s. What we understand as AI today is generative AI, a technological breakthrough that can solve general-purpose problems rather than specific ones. Nevertheless, generative AI is only a drop in the ocean of artificial intelligence, and it’s not a silver bullet.

This article draws on the knowledge of multiple AI and security experts across Sysdig. From them, you will get an overview of the AI landscape, evaluate some cases where AI and security go hand in hand, and discover how you can create your own neural network with just a spreadsheet.

This article is part of a series on AI and security.

AI used to be complex algorithms

By Crystal Morin, Senior Cybersecurity Strategist

We consider any complex calculation that performs a task simulating human intelligence to be artificial intelligence.

Under this definition, some bulky spreadsheets could be considered artificial intelligence, and I find it wonderfully demystifying. We’ll get back to this thought later.

On a more serious note, we can find early AIs in video games. The algorithm that moves enemies across the screen, finds paths to positions, and adds personality is artificial intelligence.

In 1997, IBM’s Deep Blue beat Garry Kasparov, the reigning world champion, in a six-game match. Achieving this was more nuanced than simply querying a database of all possible outcomes and choosing the best one. While Deep Blue evaluated 200 million chess positions per second, it also considered other parameters, such as how important it was to keep the king in a safe position.

Deep Blue heralded an era of complex algorithms that are still used in video games, in manufacturing to anticipate production errors, or in logistics to plan transport routes.

AI is now based on statistics: Fuzzy logic and Bayesian math

By Alejandro Villanueva, Sr. Staff Product Manager

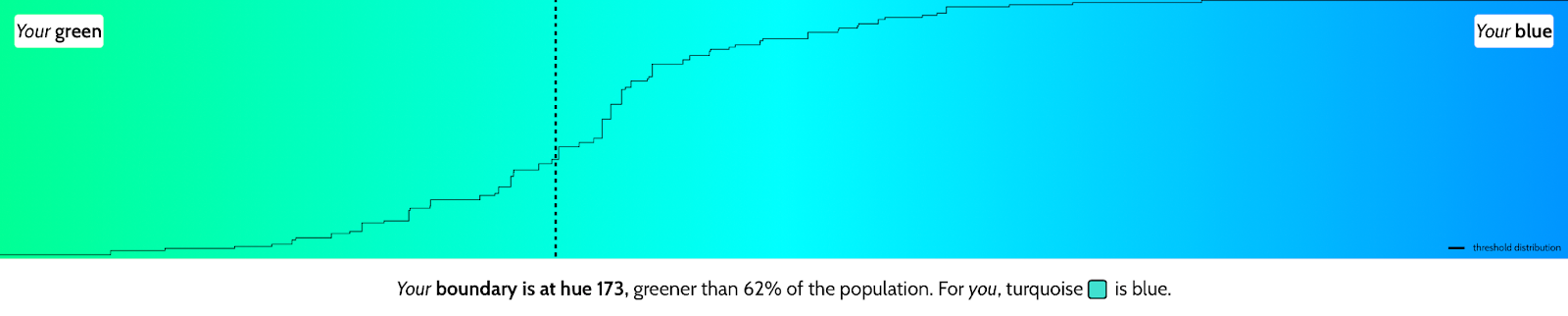

Our brain works with fuzzy logic rather than complex algorithms. Life is not black and white, 0 or 1, to our brain. For us, the inputs and outputs are unclear.

We cannot even agree on what green and blue are. Check out “Is My Blue Your Blue” to see for yourself. According to it, I classify colors as bluer than most of the population does.

We don’t care if what we see is a tiger. We’ll run first, and we’ll re-evaluate once we are safe. To survive, we need to be good at learning patterns and identifying outliers.

Computers can replicate this fuzziness using statistics, and Bayesian programming was among the first techniques for that.

Statistics in cybersecurity

By Miguel Hernández, Sr. Threat Research Engineer

For example, a Bayesian spam filter learns the probable distribution of words, or a combination of words, present in junk mail. When given a completely new email, the filter scores the probability that it is spam.
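To make this concrete, here is a minimal sketch of a naive Bayes spam scorer. The training messages are made up for illustration, and the add-one smoothing is my own assumption; real filters train on far larger corpora and use more careful tokenization.

```python
import math
from collections import Counter

# Tiny, made-up training set (hypothetical data for illustration only).
SPAM = ["win money now", "free money offer", "claim your free prize now"]
HAM = ["meeting at noon", "see you at lunch", "project status update"]


def word_counts(messages):
    """Count how often each word appears across a list of messages."""
    counts = Counter()
    for message in messages:
        counts.update(message.lower().split())
    return counts


SPAM_COUNTS = word_counts(SPAM)
HAM_COUNTS = word_counts(HAM)
VOCABULARY = set(SPAM_COUNTS) | set(HAM_COUNTS)


def log_likelihood(words, counts):
    """Sum of log P(word | class), with add-one smoothing for unseen words."""
    total = sum(counts.values())
    return sum(
        math.log((counts[word] + 1) / (total + len(VOCABULARY)))
        for word in words
    )


def spam_probability(message):
    """Return P(spam | message), treating words as independent of each other."""
    words = message.lower().split()
    prior_spam = len(SPAM) / (len(SPAM) + len(HAM))
    log_spam = math.log(prior_spam) + log_likelihood(words, SPAM_COUNTS)
    log_ham = math.log(1 - prior_spam) + log_likelihood(words, HAM_COUNTS)
    # Convert the two log scores back into a normalized probability.
    return 1 / (1 + math.exp(log_ham - log_spam))


print(spam_probability("free money"))      # closer to 1: looks like spam
print(spam_probability("status meeting"))  # closer to 0: looks legitimate
```

The filter has never seen either test message, yet it can still score them from the word frequencies it learned.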

Security tools like SIEMs and antivirus software use statistics to profile a system’s typical behavior and then identify abnormal behavior. These profiles can be based on the list of processes to flag a malicious binary; on metrics to detect resource usage spikes; or on resource access to highlight suspicious network connections or file access.
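As a rough illustration of metric-based profiling, the sketch below learns a baseline from past samples and flags values that stray too far from it. The function names, the sample CPU readings, and the threshold of three standard deviations are all assumptions for the example, not how any particular product works.

```python
from statistics import mean, stdev


def build_profile(samples):
    """Summarize typical behavior as (average, standard deviation)."""
    return mean(samples), stdev(samples)


def is_anomalous(value, profile, threshold=3.0):
    """Flag a value that sits more than `threshold` deviations from the baseline."""
    baseline, spread = profile
    if spread == 0:
        # A perfectly flat history: any change at all is unusual.
        return value != baseline
    return abs(value - baseline) / spread > threshold


# Hypothetical CPU usage percentages observed during normal operation.
cpu_history = [21, 19, 22, 20, 18, 23, 20, 21]
profile = build_profile(cpu_history)

print(is_anomalous(22, profile))  # False: within the usual range
print(is_anomalous(95, profile))  # True: a spike worth investigating
```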

While early security technologies relied on relatively simple statistical models, current solutions increasingly use neural networks to model complex, high-dimensional data. This evolution enables more accurate detection of previously unseen threats and demonstrates how statistical learning remains the foundation of AI-driven cybersecurity.

The curse of AI’s moving target

By Mateo Burillo, Product Manager

Deep Blue was a huge milestone back in the day; it was a wake-up call for many people. Suddenly, the Isaac Asimov books and movies like RoboCop were coming true.

However, reality soon followed.

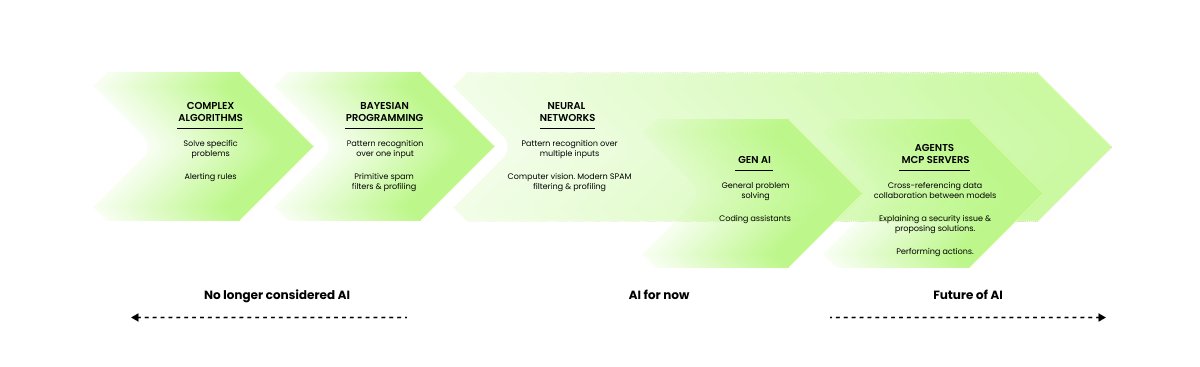

We now see how complex algorithms and Bayesian programming are too simple and can only be used to solve concrete problems.

So we stopped considering them artificial intelligence, at least academically.

This is AI’s curse: the more we understand our brain and the more computing advances, the stricter we become about labeling something as AI.

So, where are we now?

It’s all about neural networks

By Víctor Jiménez, Content Contributor

If you want to succeed at simulating human thinking, you have to take some inspiration from biology.

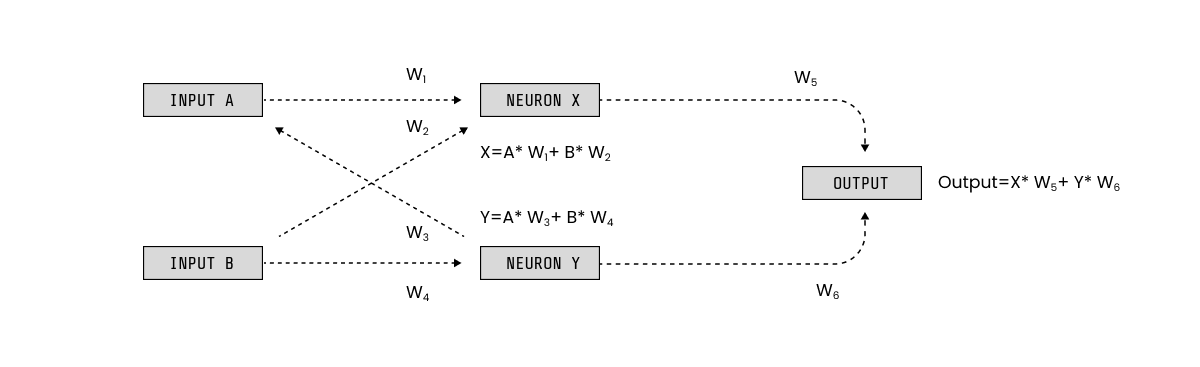

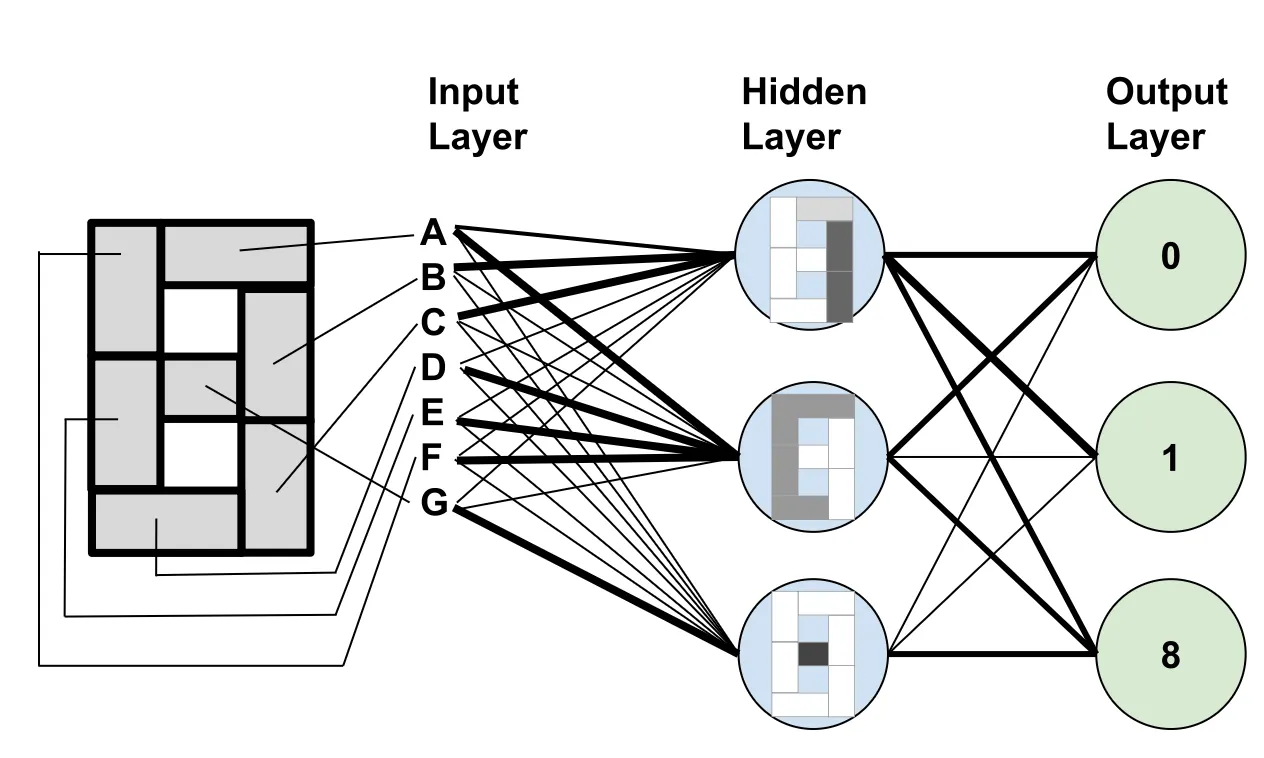

For example, a neural network uses math to model the way neurons interconnect in our brains:

- They are composed of nodes organized in layers.

- The value of each node is a weighted sum of the values from the nodes on the previous layer.

- The first layer is formed by the network's inputs, and the last layer represents the outputs.

It really is as simple as a bunch of additions and multiplications. But it’s such an abstract concept that it’s not easy to see how to put it into practice.
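The three bullet points above fit in a few lines of code. This is a bare-bones sketch: the function name `layer` is mine, and I have left out the bias terms and activation functions that practical networks add on top of the weighted sum.

```python
def layer(inputs, weights):
    """Compute one layer: each node is a weighted sum of the previous layer.

    `weights` holds one list per node, with one weight per input value.
    """
    return [
        sum(w * x for w, x in zip(node_weights, inputs))
        for node_weights in weights
    ]


# Two inputs feeding a layer of two nodes.
print(layer([1, 2], [[0.5, 0.5], [1, -1]]))  # [1.5, -1]
```

Chaining several calls to `layer`, feeding each result into the next one, gives you a multi-layer network.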

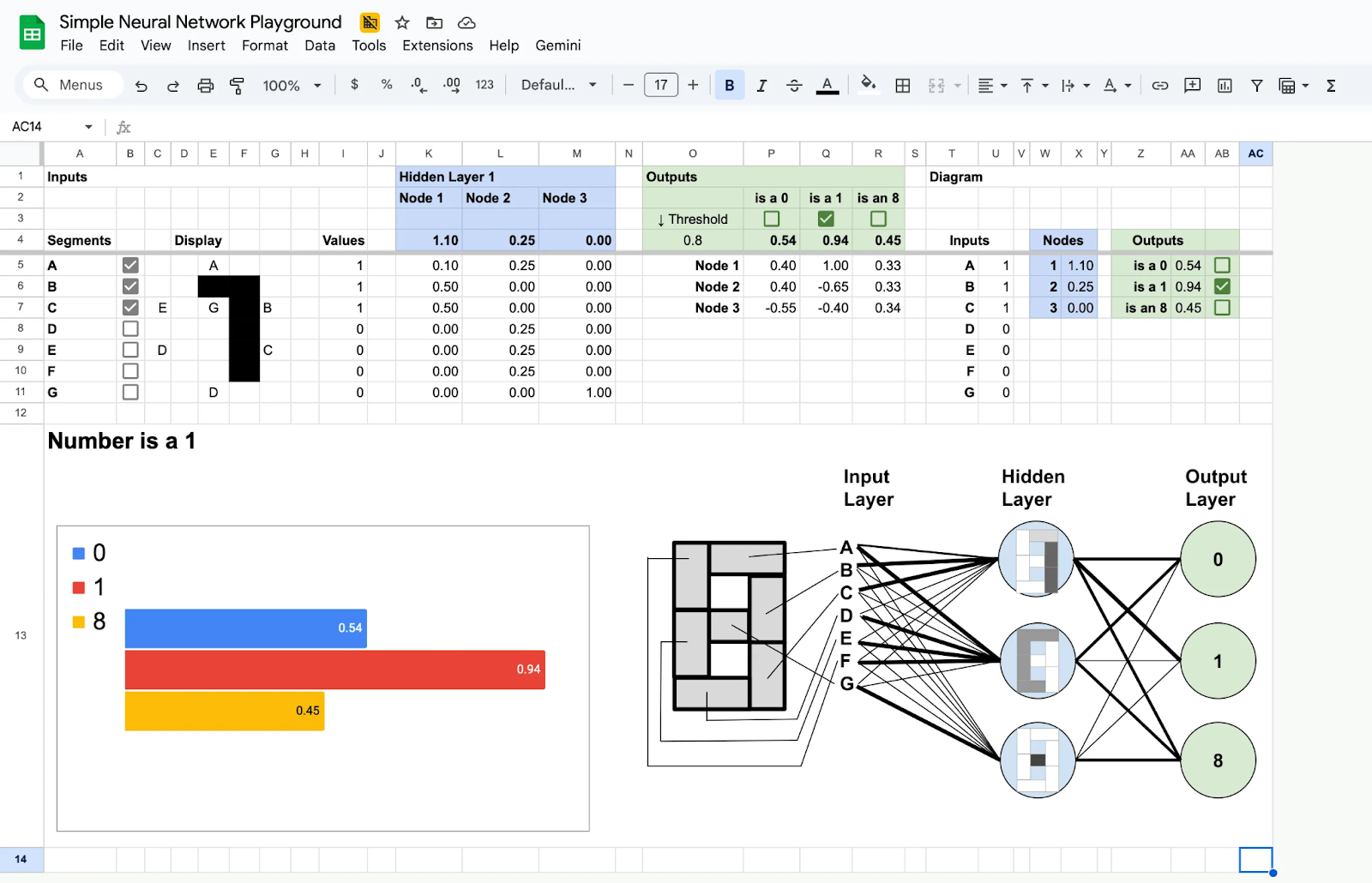

So, I prepared a very simple neural network that can detect the digits 0, 1, and 8 on a seven-segment display. To demonstrate how simple all this math is, I did it using a simple spreadsheet that you can download and play around with.

Here is a quick guide so you can inspect the spreadsheet at your own pace:

- The inputs, A to G, are 0 or 1 values indicating whether a given segment on the display is illuminated.

- The hidden layer is used to identify specific features:

  - The first node detects a stick by assigning weights to segments A, B, and C, while ignoring the remaining inputs.

  - The second node detects the remaining peripheral segments.

  - The third node detects the middle dash.

- The output combines these features to identify what number is showing:

  - It’s a Zero if it contains the stick and the peripheral segments, but not the dash.

Again, it doesn’t get much more complicated than these additions and multiplications (kind of). The main difference from a proper neural network is the volume of nodes, which can be in the millions.
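If you prefer code to spreadsheets, here is a sketch of the same idea. Be aware that the segment labeling, the weights, and the thresholds are my own assumptions, not the values from the spreadsheet: I use the common labeling where A is the top segment, G is the middle one, and the digit 1 lights only B and C. The rules for One and Eight are extrapolated from the Zero rule described above.

```python
SEGMENTS = "ABCDEFG"

# Hidden layer: (weights for segments A..G, threshold) per feature.
HIDDEN = {
    "stick":      ([0, 1, 1, 0, 0, 0, 0], 2),  # B and C both lit
    "peripheral": ([1, 0, 0, 1, 1, 1, 0], 4),  # A, D, E, and F all lit
    "dash":       ([0, 0, 0, 0, 0, 0, 1], 1),  # G lit
}

# Output layer: (weights for stick, peripheral, dash, threshold) per digit.
OUTPUT = {
    0: ([1, 1, -1], 2),   # stick and peripheral, but no dash
    1: ([1, -1, -1], 1),  # stick only
    8: ([1, 1, 1], 3),    # everything lit
}


def weighted_sum(weights, values):
    return sum(w * v for w, v in zip(weights, values))


def step(value, threshold):
    """Fire (1) when the weighted sum reaches the threshold, else stay at 0."""
    return 1 if value >= threshold else 0


def detect(lit_segments):
    """Return the digits whose output node fires for the lit segments."""
    inputs = [1 if s in lit_segments else 0 for s in SEGMENTS]
    features = [step(weighted_sum(w, inputs), t) for w, t in HIDDEN.values()]
    return [
        digit
        for digit, (w, t) in OUTPUT.items()
        if step(weighted_sum(w, features), t)
    ]


print(detect("ABCDEF"))   # [0]
print(detect("BC"))       # [1]
print(detect("ABCDEFG"))  # [8]
```

The negative weights are what let the network say “this feature must be absent”, which is how it tells a zero from an eight.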

Note: This math (matrix multiplication and addition) is the same as that used in 3D graphics. That’s why graphics cards are also used for AI computing.

If you want to see a more realistic example, check out this neural network simulator that can detect handwritten numbers. There are plenty of tutorials on building a neural network to classify handwritten numbers and training it on the original MNIST dataset.

Wait, train it?

Machine learning: Training neural networks

By Emanuela Zaccone, AI Staff Product Manager

This structure of nodes and weights is called a neural model. The model we used for our digit detector was relatively simple, and we could manually assign the weights.

However, how do you build a model with billions of nodes?

Well, you train it.

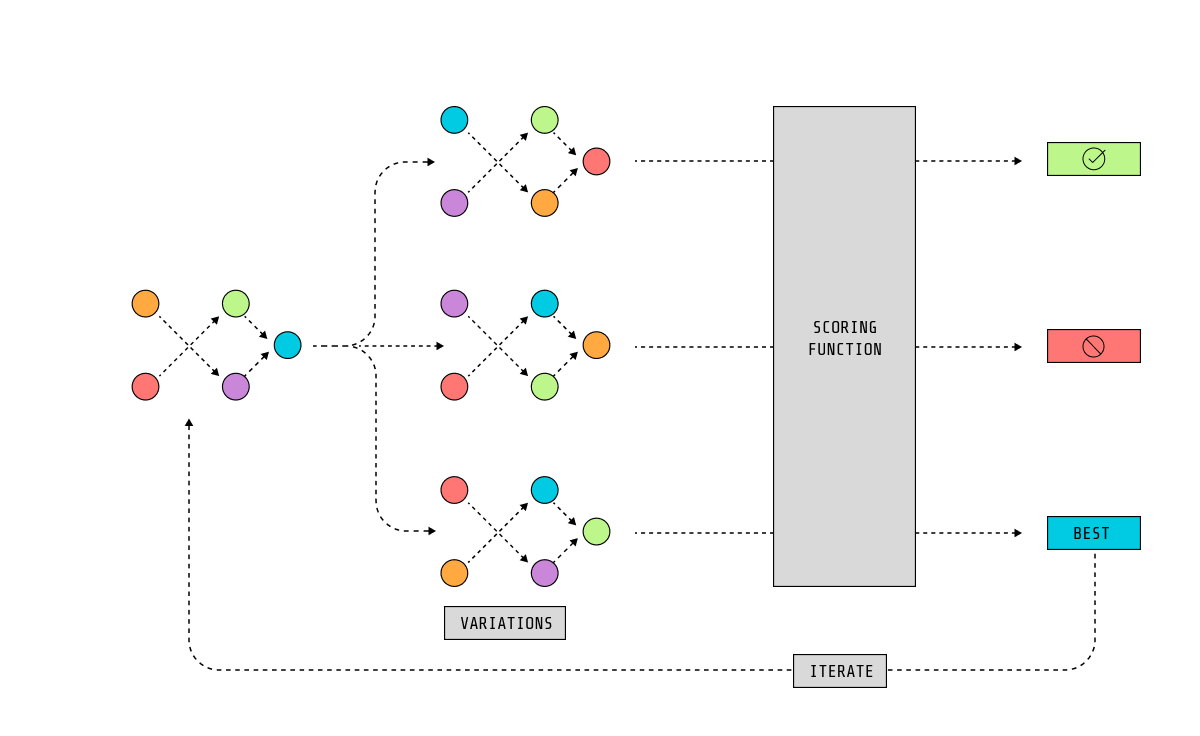

One way to do it is with genetic algorithms:

- Start with a network structure.

- Define a scoring metric to evaluate the model’s performance. We’ll call that the fitness function.

- Provide some random weights and run a simulation.

- Use the fitness function to pick the most successful models from this generation.

- Vary the weights slightly and run another simulation.

- Pick the most successful ones, vary slightly, simulate again, and repeat hundreds of times.

If you are lucky, after several generations, you’ll end up with a model that can perform the task you are asking it to do. This system is inspired by how natural selection chooses the fittest organisms and represents the trial-and-error process we humans use to learn.
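The loop above can be sketched in a few lines. Everything specific here is an assumption for the example: the task (a single node learning the logical AND of two inputs), the population size, and the mutation scale. It also skips crossover between models, just like the steps described above.

```python
import random

# Hypothetical task: find weights so a single node reproduces logical AND.
SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]


def predict(weights, inputs):
    """One node: weighted sum of two inputs plus a bias, then a threshold."""
    w1, w2, bias = weights
    return 1 if w1 * inputs[0] + w2 * inputs[1] + bias > 0 else 0


def fitness(weights):
    """Fitness function: how many samples the model gets right."""
    return sum(predict(weights, x) == y for x, y in SAMPLES)


def mutate(weights, rng, scale=0.5):
    """Vary each weight slightly with random noise."""
    return [w + rng.gauss(0, scale) for w in weights]


def evolve(generations=200, population_size=20, survivors=5, seed=0):
    rng = random.Random(seed)
    # Start with random weights.
    population = [
        [rng.uniform(-1, 1) for _ in range(3)] for _ in range(population_size)
    ]
    for _ in range(generations):
        # Pick the most successful models from this generation.
        population.sort(key=fitness, reverse=True)
        best = population[:survivors]
        if fitness(best[0]) == len(SAMPLES):
            break  # A model already solves the task.
        # Keep the survivors and fill the rest with mutated copies of them.
        children = [
            mutate(rng.choice(best), rng)
            for _ in range(population_size - survivors)
        ]
        population = best + children
    return max(population, key=fitness)


winner = evolve()
print(winner, fitness(winner))
```

Because the survivors carry over unchanged, the best score never gets worse from one generation to the next.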

Note that this training method is not the most efficient, so it’s not exactly how AI models are trained. In any case, take it as an example of a way a computer can learn. Genetic algorithms also make for very entertaining content.

Until recently, the most significant area to which machine learning has been applied is computer vision, where AI models can recognize objects in images.

Robots and self-driving cars use computer vision to navigate; your phone’s camera uses it to adjust settings based on what you are photographing; and factories can detect failures before they leave the production line.

It also plays a significant role in modern medicine as an aid in diagnostics. For example, it helps detect autoimmune diseases from photos of your nails.

Neural networks are being used everywhere, from reducing noise in your microphone so your video calls sound better, to aiding in industrial design to create better rocket engines.

Neural networks in cybersecurity

By Crystal Morin, Senior Cybersecurity Strategist

As we mentioned earlier, modern spam filters and profilers use neural networks.

The use of neural networks allows profilers to process data from several sources. For example, banks often feed all your activity to a neural network to flag suspicious activity.

However, it’s been challenging to build accurate, single-purpose neural networks for cybersecurity. Technology changes too often, and it’s almost impossible to debug a model.

That’s why security applications for these models are mostly limited to computer vision and biometrics, such as fingerprint sensors, facial recognition, and security cameras.

A new cybersecurity area born with neural networks is adversarial machine learning. It studies so-called dark AI attacks on models, such as evasion or data poisoning, and the defenses against them. These kinds of attacks became popular in 2017, when security researchers tricked self-driving cars into speeding up by modifying traffic signs.

Generative AI: LLMs and diffusion

By Manuel Boira, Senior Solutions Architect

But the current AI hype has nothing to do with the above. What everyone knows about artificial intelligence nowadays is generative AI: algorithms capable of generating content, like text (with large language models) or images (with diffusion models).

These are still neural networks at their core, with some added bells and whistles.

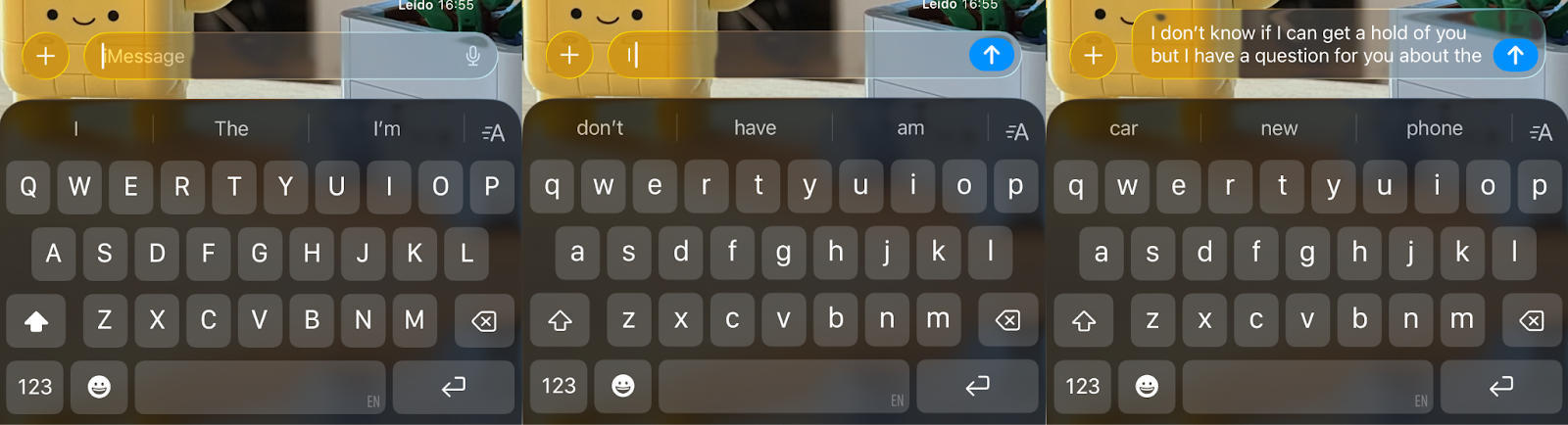

Creating a large language model

To simplify, a large language model (LLM) starts as a neural network trained to predict the next word in a text, similar to the predictive text in your phone’s keyboard.

It’s trained on as much written text as possible, and that text is tagged with some context so the AI can understand the difference between languages and between formal and technical expressions.

At this stage, the AI is scored positively if it can reproduce existing texts.

But as we learned from the “write a paragraph with predictive text only” experiment, this leads to an AI that generates only nonsense.
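You can reproduce that experiment with a toy predictor. The sketch below is not a neural network: it simply counts which word most often follows each word in a made-up corpus, which is enough to show why “always pick the most likely next word” goes in circles.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus (hypothetical; a real model trains on billions of words).
CORPUS = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."


def train(text):
    """For every word, count which words follow it in the text."""
    following = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it is unknown."""
    candidates = model.get(word)
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


def generate(model, start, length=8):
    """Repeatedly append the predicted next word, like tapping predictive text."""
    words = [start]
    while len(words) < length:
        nxt = predict_next(model, words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)


model = train(CORPUS)
print(predict_next(model, "the"))  # cat
print(generate(model, "the"))
```

The generated text is grammatical in small pieces but quickly starts repeating itself, which is why a second training round is needed.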

In a second training round, we refine the model.

To that end, we use a different LLM to score the generated text and ensure it aligns with our guardrails and biases. Errors at this stage can lead to surprising results, such as a version of GPT-2 becoming lewd due to an error in the scoring function.

If the training is done properly, we get a large language model.

ChatGPT, Copilot, Gemini, etc., are all LLMs, statistical models of text slightly more intelligent than predictive text. That’s why they excel at correcting grammar or rewording texts. That’s also why they are good at programming; after all, programming languages are also driven by grammar rules over a finite vocabulary.

However, they struggle with anything related to logic and reasoning, like doing math or identifying fake news. Luckily for us, human reasoning is also grounded in experience and emotion, so language alone is not enough.

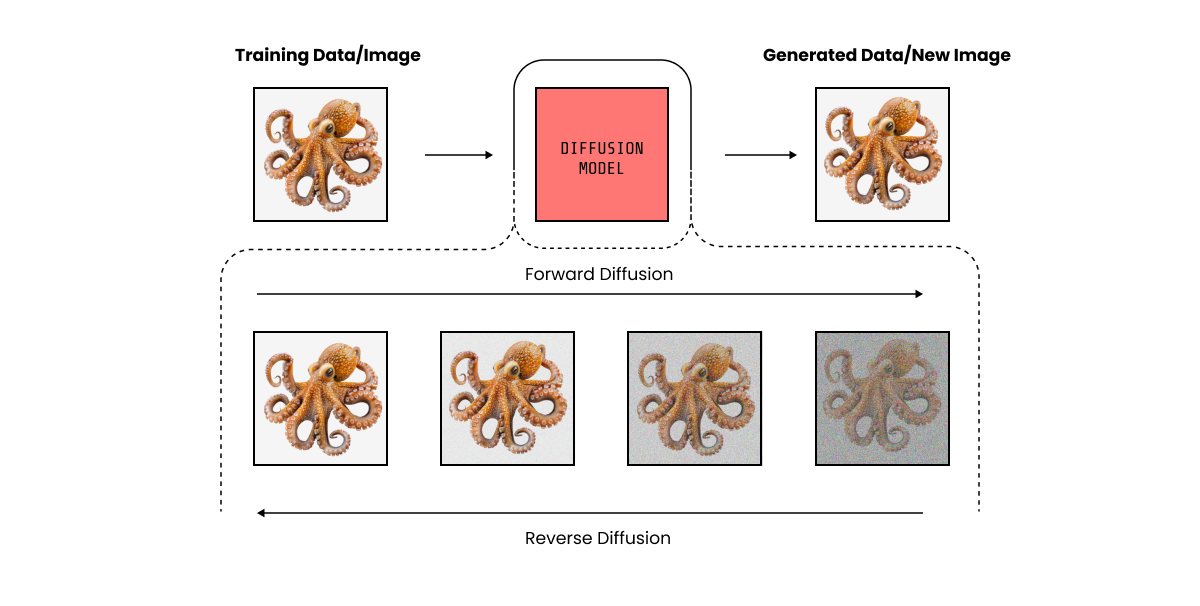

Image generation with diffusion

Generating images is a bit different.

Diffusion models are an evolution of computer vision models that are trained on images with increasing levels of noise.

To generate an image, we start with a noise pattern. Then the model shifts the pixels around to make the image look a little more like what we asked for. This process is repeated several times until the image has no noise.
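Here is a toy sketch of that loop, under heavy assumptions. An “image” is just a list of pixel values, the noise blending is a simplification of what real diffusion models use, and `predict_clean` is a stand-in for the trained neural network: in this example it cheats by already knowing the answer.

```python
import random


def add_noise(image, noise_level, rng):
    """Blend an image with random noise (0 keeps it intact, 1 is pure noise).

    Training data would pair clean images with noisier and noisier versions.
    """
    return [(1 - noise_level) * p + noise_level * rng.gauss(0, 1) for p in image]


def denoise_step(image, predict_clean, strength=0.3):
    """Shift each pixel a little toward the model's guess of the clean image."""
    guess = predict_clean(image)
    return [p + strength * (g - p) for p, g in zip(image, guess)]


def generate(predict_clean, size, steps=30, seed=0):
    """Start from pure noise and repeat the denoising step several times."""
    rng = random.Random(seed)
    image = [rng.gauss(0, 1) for _ in range(size)]
    for _ in range(steps):
        image = denoise_step(image, predict_clean)
    return image


# Hypothetical 4-pixel "image" and a stand-in model that always guesses it.
TARGET = [0.0, 0.5, 1.0, 0.5]
image = generate(lambda noisy: TARGET, size=len(TARGET))
print([round(p, 3) for p in image])
```

In a real model, the guess comes from a network conditioned on your prompt, and that is where all the complexity lives. The surrounding loop stays about this simple.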

Diffusion models excel at composition, making them ideal for brainstorming while creating concept art.

However, they are just “pixel shakers”. Like LLMs, they lack experience and feelings, so the images they create often feel uncanny, lifeless, or all look the same.

Diffusion models struggle with video as well, having a hard time maintaining object permanence, understanding the laws of physics, and keeping consistency over time. The first models tried to hide uncanny movements by producing slow-motion videos. They are better now; it’s likely the training data is now tagged with temporal meta information like motion vectors. Consistency over time is still an issue; not many tools let you create videos longer than 10 seconds, as things start looking like a Doctor Strange movie.

Gen AI in cybersecurity

By Miguel Hernández, Sr. Threat Research Engineer

LLMs have democratized security.

A few years ago, you needed to be a security expert to understand the severity of a security alert, then investigate a solution on the internet, and then implement it.

Now, a chatbot will guide you. First, it looks at your infrastructure’s context to present you with the resources affected, then gathers mitigation steps and helps you implement them. This means that DevOps engineers can also use security tools effectively, and security engineers have extra time to focus on more complex tasks.

This works both ways; security engineers can also use LLMs to investigate where an alert originates in the application’s source code and create more detailed issues for the development team. We saw an example of this in Investigating security issues with ChatGPT and the GitHub MCP server.

On the other hand, Gen AI has also made things easier for cybercriminals. Our Threat Research Team recently detected an attack with indicators that suggest the threat actor leveraged large language models throughout the operation to automate cloud services discovery, generate malicious code, and make real-time decisions. Read more in AI-assisted cloud intrusion achieves admin access in 8 minutes.

These new threats are more unpredictable, which raises the importance of runtime security as a safety net.

What is the hype (and the controversy) about?

By Álvaro Iradier, Technical Product Manager

AI, including LLMs, has been around for decades. So, why all the hype now?

What has changed the game is the increase in computing power.

A few years ago, models were limited to performing specific tasks. Models can now scale to gigabytes of memory, allowing LLMs to solve a wide variety of problems. We are having another wake-up call, as we did in ‘97 when Deep Blue beat Kasparov, and we are thinking that maybe now is when science fiction becomes reality.

However, AI still hasn’t solved some essential challenges, and that is raising concerns: There are still critical edge cases where AI cannot yet replace humans, AI computing is resource-intensive, and there are ethical questions about how AI software is developed.

As the industry chases AI hype, we must maintain a sceptical mindset, and also separate transformative use cases from those that don’t reduce cognitive burden.

What’s next?

By Marla Rosner, Content Marketing Manager

So, what will happen next?

We are at a stage where everyone is testing the limits of this new technology. While some people are proposing game-changing applications for AI, most use cases remain novelties.

As things currently stand, AI has democratized some technologies, like coding and security tools. However, these tools still need a human with real-world experience to guide them.

When LLMs go unchecked, they just create noise. For curl, an open-source command-line tool, things got so out of hand that they have stopped rewarding bug bounty reports and have tweaked the submission process. Real-world experience is still needed.

And while vibe coding has enabled anyone to create a successful project, it has also created a demand for real software engineers to fix glaring scalability and security issues.

At the same time, AI is still evolving.

We are seeing AI chatbots increasingly behave like agents, in what is called agentic AI. In this paradigm, AIs use MCP servers to share context across tools, correlate information, and collaborate with other AIs to perform complex tasks.

LLMs are not getting smarter as quickly anymore, but they are able to manage more information and are capable of taking action. This is leading to a new era of automation, where experienced humans are more important than ever.

Read more in AI is still a workload.

Conclusion

Generative AI is just the new kid on the block, grabbing everyone’s attention. But old-school AI still lives in our spam filters, biometrics, profiling, and computer vision.

While Gen AI has democratized many technologies, humans are still needed.

That’s why we’ll see a more surgical approach to Gen AI in the future, positioned more like a subtle assistant than a human replacement.

If you liked this article, check out the other one in the series.